A few years ago I spent a lot of time converting individual GAR (from Corsica) into team GAR, which is the sum of each player’s contribution. The data was flawed because I couldn’t differentiate between playoffs and regular season, and if a player was traded in-season I couldn’t tell how he performed for each team. Therefore, I had to make a lot of estimates, but even with imperfect data the results were encouraging.

Now it’s a thousand times easier, as evolving-hockey.com lists team GAR directly. You have to be a Patreon supporter to see it, but if you have a few bucks to spare, it’s worth supporting their great work.

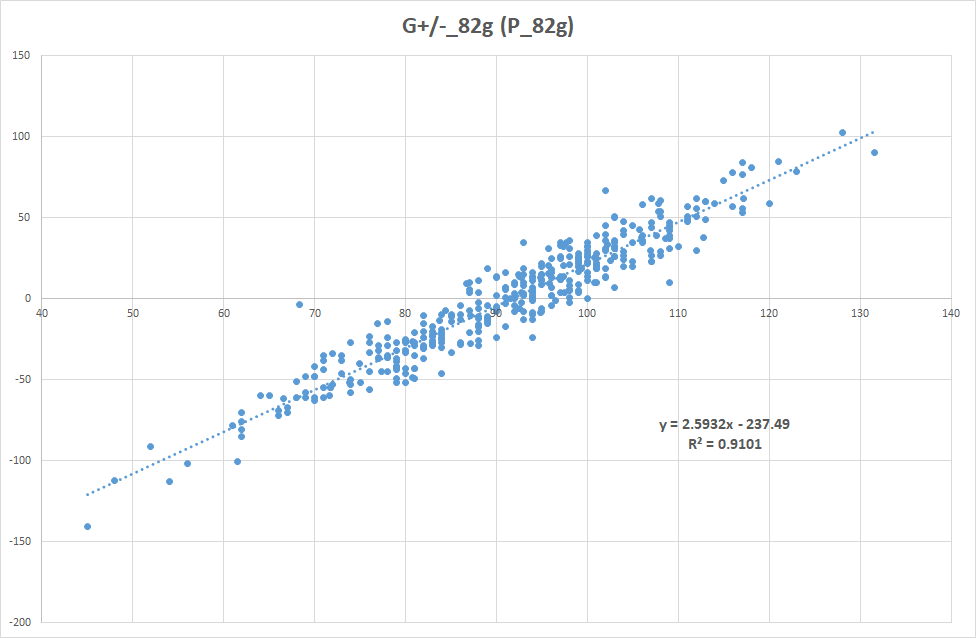

Again I’m using team data, so that I can see how well it correlates with GF%. But first, how well do goal differential and standings points actually correlate? Here’s G+/- as a function of points. I have used 82-game pace, since otherwise the shortened season and this one would skew the results.
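The 82-game pace adjustment is just a proportional rescaling. Here is a minimal sketch, assuming a season total and the number of games played are known; the function name and the example numbers are illustrative and not taken from the data set.

```python
def to_82_game_pace(value: float, games_played: int, season_length: int = 82) -> float:
    """Scale a season total (points, goal differential, GAR, ...) to a full-season pace."""
    if games_played <= 0:
        raise ValueError("games_played must be positive")
    return value * season_length / games_played


# Hypothetical example: 60 points in a 48-game season.
print(to_82_game_pace(60, 48))  # 102.5
```

Applying the same scaling to both axes keeps seasons of different lengths comparable in one plot.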

I wanted to show two things. First, I wanted to show that there’s a good correlation between goal differential and winning. This seems obvious – if you outscore your opponents you often win. The other thing I wanted to show was that every point is worth about 2.6 goals. This is a good thing to have in mind later on if you want to convert GAR into WAR.
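To make the GAR-to-WAR idea concrete, here is a small sketch of the conversion. The 2.6 goals per point comes from the fit above; treating a win as exactly two standings points is a simplifying assumption (it ignores the loser point), and the function names are made up for illustration.

```python
GOALS_PER_POINT = 2.6  # from the goal differential vs. points fit in this post
POINTS_PER_WIN = 2     # assumption: a win is worth two standings points


def goals_to_points(goals: float) -> float:
    """Convert goals above replacement into standings points above replacement."""
    return goals / GOALS_PER_POINT


def goals_to_wins(goals: float) -> float:
    """Convert goals above replacement into wins above replacement."""
    return goals / (GOALS_PER_POINT * POINTS_PER_WIN)


# Hypothetical example: 26 goals above replacement.
print(goals_to_points(26))  # about 10 points
print(goals_to_wins(26))    # about 5 wins
```

Under these assumptions a win costs roughly 5.2 goals.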

I have also switched it around to show that an average team in the NHL gets about 91.6 points. In a perfect world where every game is worth the same number of points, the average would be exactly 82 points (with 2-point games) or 123 points (with 3-point games). But that’s another discussion for another time.
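The arithmetic behind 82 and 123 can be sketched as follows. Every game hands out either 2 points (decided in regulation) or 3 points (decided in overtime or a shootout), shared between two teams. The helper names are illustrative, and reading 91.6 as the true league mean is an assumption.

```python
GAMES_PER_TEAM = 82


def average_points(share_three_point_games: float) -> float:
    """League-average points per team for a given share of 3-point games."""
    if not 0.0 <= share_three_point_games <= 1.0:
        raise ValueError("share must be between 0 and 1")
    points_per_game = 2.0 + share_three_point_games  # split between two teams
    return GAMES_PER_TEAM * points_per_game / 2.0


def implied_three_point_share(avg_points: float) -> float:
    """Invert average_points: the share of 3-point games behind a league average."""
    return (avg_points - GAMES_PER_TEAM) / (GAMES_PER_TEAM / 2.0)


print(average_points(0.0))  # 82.0
print(average_points(1.0))  # 123.0
print(round(implied_three_point_share(91.6), 3))  # roughly 0.234
```

So an average of 91.6 points would correspond to a bit under a quarter of all games going past regulation, if the assumption holds.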

With that out of the way, let’s turn our attention towards evolving-hockey’s GAR and xGAR models. I consider them two unrelated models and, as we shall see later, they both have great descriptive value. In other words, they can both explain past results quite well.

If you study the models on a player level, you will find some differences. Generally, GAR values defenders more than xGAR does, and defensive defensemen in particular are valued differently. Brooks Orpik is a good example of this. According to xGAR he has been one of the worst defenders in the league when we look at his career numbers. GAR, on the other hand, thinks he has been an above-average defenseman. I don’t know if one model is better than the other. They are just slightly different.

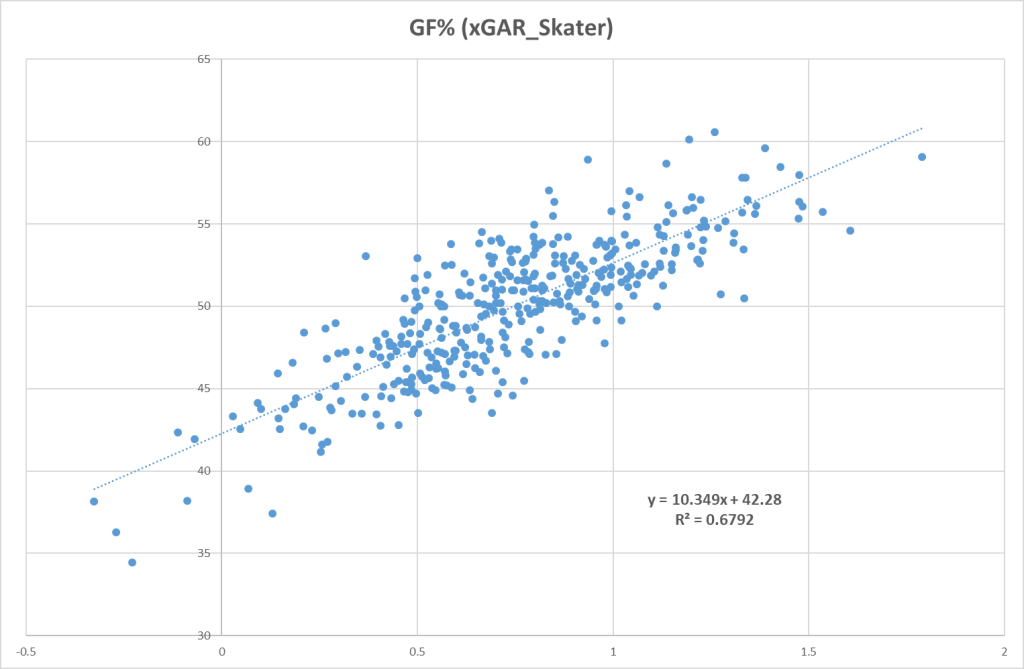

Let’s start with the GAR model. At first I will ignore the goalies, so here’s GF% plotted as a function of team Skater GAR.

The correlation (R-squared = 0.631) with GF% is stronger than it was for Corsi and expected goals, so this bodes well for when we adjust for goaltending.

I did the same thing for the xGAR model, and the result is similar although slightly better.
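For readers who want to reproduce this kind of number, here is a minimal sketch of how an R-squared for a straight-line fit can be computed. It uses only the standard library, and the input lists in the example are toy values, not the team data behind the graphs.

```python
from math import fsum


def r_squared(x, y):
    """R-squared of a simple linear fit of y on x (the squared Pearson correlation)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = fsum(x) / n
    mean_y = fsum(y) / n
    sxy = fsum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = fsum((a - mean_x) ** 2 for a in x)
    syy = fsum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("x and y must each contain some variation")
    return sxy * sxy / (sxx * syy)


# Toy values only: a perfectly linear relationship gives an R-squared of 1.
print(r_squared([1, 2, 3, 4], [2, 4, 6, 8]))
```

With real data, `x` would be team Skater GAR (or xGAR) and `y` would be GF%, one entry per team season.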

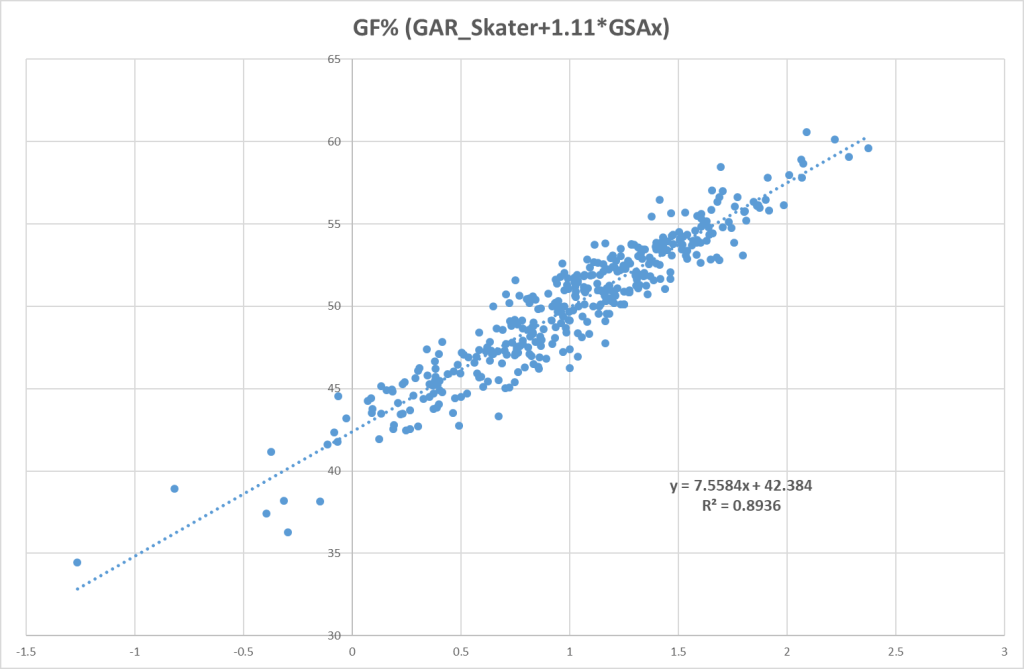

The next step is to add the goalies’ impact. I could use Team GAR Goalie, but it turns out that goals saved above expected (GSAx) works better. By refining the graph, I found that multiplying GSAx by 1.11 gives the best result. Here’s the graph:

This shows that GAR Skater combined with GSAx correlates very well with team quality. This is interesting.
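One way to search for a GSAx multiplier like the one above is a simple grid search that keeps the weight with the highest R-squared. This is a sketch of that idea, not necessarily how the graph was actually refined. The function names and the toy numbers are assumptions for illustration.

```python
from math import fsum


def r_squared(x, y):
    """R-squared of a simple linear fit of y on x (the squared Pearson correlation)."""
    n = len(x)
    mean_x = fsum(x) / n
    mean_y = fsum(y) / n
    sxy = fsum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = fsum((a - mean_x) ** 2 for a in x)
    syy = fsum((b - mean_y) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


def best_gsax_weight(skater_gar, gsax, gf_pct, weights):
    """Return (weight, r2) for the weight w maximizing R-squared of skater_gar + w * gsax."""
    best = None
    for w in weights:
        combined = [s + w * g for s, g in zip(skater_gar, gsax)]
        r2 = r_squared(combined, gf_pct)
        if best is None or r2 > best[1]:
            best = (w, r2)
    return best


# Toy data built so that the target equals skater + 2 * gsax exactly.
skater = [1, 2, 3, 5]
goalie = [1, -1, 2, 0]
target = [3, 0, 7, 5]
print(best_gsax_weight(skater, goalie, target, [0, 1, 2, 3]))
```

On real data a finer grid, for example steps of 0.01, would be needed to land on a value like 1.11.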

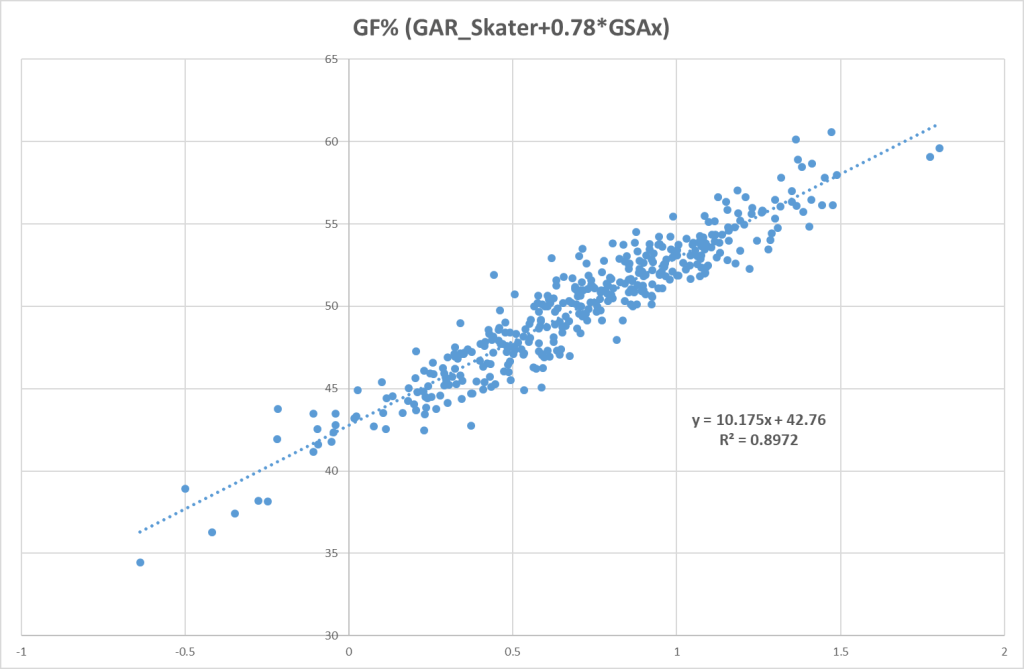

I also did this for xGAR, but there GSAx should only be multiplied by 0.78. This may sound strange, but it’s because Team GAR Skater is larger than Team xGAR Skater by a factor of 1.42 (note that 1.11 / 0.78 ≈ 1.42). This fact is also important later on, when I combine the two models.

Here’s the graph of Team xGAR Skater combined with GSAx.

The result is similar to that of the GAR model, so I would say both models can explain past results well.

So far so good. The next thing I want to do is to combine the two models into one. There are a few different ways to do this, but I will spare you the details for now. I’ve called the model LS-GAR, and it combines GAR Skater, xGAR Skater and GSAx. Here it is:

As hoped, the combined model works even better than GAR and xGAR individually. The final step in this already way too long and nerdy blog is to make some adjustments to make the model more user friendly. I will skip over the calculations, but I have done two things:

- I have made it an average model, meaning if the team is at 0, it is now an average team (bubble playoff team) instead of a replacement team. The model has therefore changed from a GAR model to a GAA model (goals above average).

- The second thing I have done is to make sure one goal in my model actually equals one goal in team goal differential. This way we can compare LS-GAA directly to goal differential.
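The calculations are skipped here, but one plausible way to do both adjustments is sketched below: centre the model on the league mean, then scale it by the slope of a straight-line fit of goal differential on the model. This is an assumption about the method, not the actual LS-GAA calculation, and the names and toy numbers are hypothetical.

```python
from math import fsum


def fit_slope(x, y):
    """Least-squares slope of y on x."""
    n = len(x)
    mean_x = fsum(x) / n
    mean_y = fsum(y) / n
    sxy = fsum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = fsum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def to_goals_above_average(model_values, goal_diffs):
    """Centre on the league mean and rescale so one model goal equals one real goal."""
    slope = fit_slope(model_values, goal_diffs)
    mean_model = fsum(model_values) / len(model_values)
    return [slope * (v - mean_model) for v in model_values]


# Toy values: three teams whose goal differential is twice the centred model value.
print(to_goals_above_average([10, 20, 30], [-20, 0, 20]))  # [-20.0, 0.0, 20.0]
```

After this step an average team sits at 0 and the values are on the same scale as goal differential.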

Here’s how it looks, again using 82-game pace.

These adjustments make LS-GAA a lot easier to interpret, since we can now compare LS-GAA and goal differential directly. So in the next blog I will look at the numbers in greater detail. Up until now we have only looked at the bigger picture (the macro level). The next step is to look at and compare teams (the meso level), before we finally turn our attention to the individual players (the micro level).

Conclusion:

- Both GAR and xGAR are great descriptive models.

- A point in the standings is worth about 2.6 goals.

- I have made a model (LS-GAA) using GAR and xGAR as the basis. Theoretically LS-GAA equals goal differential.

- So far I have only looked at Team LS-GAA, but considering how well it explains results, it’s likely also an interesting stat down on the player level.

Stay safe and remember to be kind

All stats from evolving-hockey.com

I apologize for not getting back to you sooner. I am behind in my readings.

I’ve read all your blogs to date now. I have some questions for each, but wanted to start with this one, as all the rest are based on this model.

You gloss over how you combine GAR, xGAR, and GSAx to create your final LS-GAR, which you then refine to LS-GAA.

I’m not too interested in the final step, but I would like to know how you combined the three indicators to create the final product LS-GAR, and what other methods were available, and if you tried them to see if they gave you a more accurate model?

Have you made the model publicly available yet? Your latest blog seems to indicate that it is not. But I may have misread something, as I read them all in one sitting and will have to return to each one separately when I get a chance.


That’s super cool… I didn’t think anyone would go back and read all the blogs in one sitting. I’ve evolved quite a bit since the first blogs and changed some things along the way. In the original LS-GAR model I just multiplied the xGAR components (except the penalty components) by a factor, so that the contributions from each model are the same. So it was just: ((GAR + 1.42*xGAR) / 2) + GAR_PEN
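Written out as code, the formula above is a one-liner. In this sketch `gar` and `xgar` are read as the non-penalty components and `gar_pen` as the penalty component. That reading, and the function name, are assumptions based on the description.

```python
XGAR_SCALE = 1.42  # Team GAR Skater / Team xGAR Skater, as quoted in the post


def ls_gar(gar: float, xgar: float, gar_pen: float) -> float:
    """Original LS-GAR combination: ((GAR + 1.42 * xGAR) / 2) + GAR_PEN."""
    return (gar + XGAR_SCALE * xgar) / 2 + gar_pen


# Hypothetical inputs, not real team values.
print(ls_gar(10.0, 10.0, 1.0))  # about 13.1
```

Scaling xGAR by 1.42 first puts both models on the same footing before they are averaged.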

In the newest version of the model (sGAA) I have fitted Evolving-Hockey’s models individually. So I refined the graphs G+/- (GAA) and G+/- (xGAA) to give the highest correlation. Then I just summed the two models and divided by 2. I made sure GSAx and the penalty components had the same weight in both the GAA and xGAA refinements.

In the end, the difference between the two approaches isn’t that great, but I prefer the newest method. I want the model to be able to describe team performance as well as possible.

You can find the complete sGAA model here: https://hockeystatisticscom.files.wordpress.com/2020/08/sgaa.xlsx

In this version I have also adjusted for rink bias. Just be careful not to overinterpret the individual player data. In a sport like hockey it’s impossible to completely isolate player performance.
